Your app rating directly impacts ranking & downloads

Three wins from fixing your rating: stronger category rank, more organic downloads and cheaper paid acquisition through better store-page conversion.

Most app teams know ratings matter. Far fewer treat ratings as the growth lever they really are.

U.S. App Store metadata from APPlyzer covering 17,128 apps, combined with category-level rank-to-download curves, shows a clear pattern: better ratings are associated with better chart performance, meaningfully more organic downloads and stronger commercial efficiency for paid acquisition.

The important point is not that rating is the only growth driver. Rankings are shaped by brand strength, installs, conversion, retention, monetisation, merchandising and seasonality. But the data does suggest that ratings behave like a gate at the top end of the chart. In practical terms, low ratings rarely coexist with elite positions. And once a rating improves, the upside shows up in three places.

Win 1: better ratings are strongly associated with better category rank

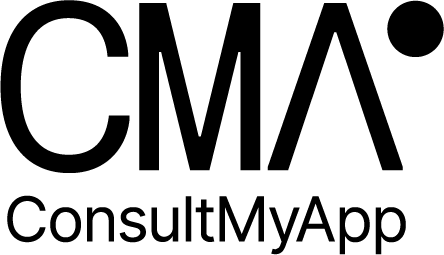

At a raw correlation level, the rating-to-rank relationship looks modest because so many other variables influence chart position. But once you look at the top of the chart, the signal becomes much clearer.

• 94% of top-10 apps in this sample are rated 4.5+

• 99% of top-10 apps are rated 4.0+

• 83% of top-100 apps are rated 4.5+

That is why the best way to describe app rating is not as a silver bullet. It is closer to a qualifying condition. A low-rated app can still exist in the charts, but the very highest positions are overwhelmingly populated by apps users already trust.

Using smoothed rating bands from the dataset, the median category-rank relationship looks roughly like this:

• around 4.0★: rank ~292

• around 4.6★: rank ~274

• around 4.8★: rank ~243

Put differently, within the core 4.0 to 4.9 range, each additional +0.1 stars is associated with roughly 4.7 better chart positions on average in this dataset.
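As a quick sanity check on the band medians, the sketch below works out how many chart positions each +0.1 stars is worth between the three quoted bands. It uses only the three published points, so it will not reproduce the roughly 4.7 average, which is stated for the whole 4.0 to 4.9 range. The function name `positions_per_tenth` is illustrative, not part of the original analysis.

```python
# Smoothed band medians quoted above: (star rating, median category rank).
bands = [(4.0, 292), (4.6, 274), (4.8, 243)]


def positions_per_tenth(low, high):
    """Rank positions gained per +0.1 stars between two bands (lower rank is better)."""
    (rating_low, rank_low), (rating_high, rank_high) = low, high
    tenths = round((rating_high - rating_low) * 10)  # number of 0.1-star steps
    return (rank_low - rank_high) / tenths


step_1 = positions_per_tenth(bands[0], bands[1])   # 4.0 -> 4.6
step_2 = positions_per_tenth(bands[1], bands[2])   # 4.6 -> 4.8
overall = positions_per_tenth(bands[0], bands[2])  # 4.0 -> 4.8

print(f"4.0 -> 4.6: {step_1:.1f} positions per +0.1 stars")
print(f"4.6 -> 4.8: {step_2:.1f} positions per +0.1 stars")
print(f"4.0 -> 4.8: {overall:.1f} positions per +0.1 stars")
```

On these three points alone, the step from 4.6 to 4.8 is worth more positions per tenth of a star than the step from 4.0 to 4.6, which fits the idea that ratings matter most near the top of the chart.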

That matters because ranking gains compound. Moving from the high 200s into the mid 200s is not just a cosmetic improvement. It shifts the amount of chart visibility your app receives and changes the volume of users who discover you organically.

The threshold view makes the same point from a different angle. Apps rated 4.7 to 5.0 are materially more likely to sit in the top 100 than apps rated below 4.0. So while the scatter plot looks noisy, the commercial takeaway is clean: high ratings and high chart positions travel together far more often than low ratings and high chart positions do.

Win 2: better rankings convert into more organic downloads

The second win is where the argument becomes especially useful commercially.

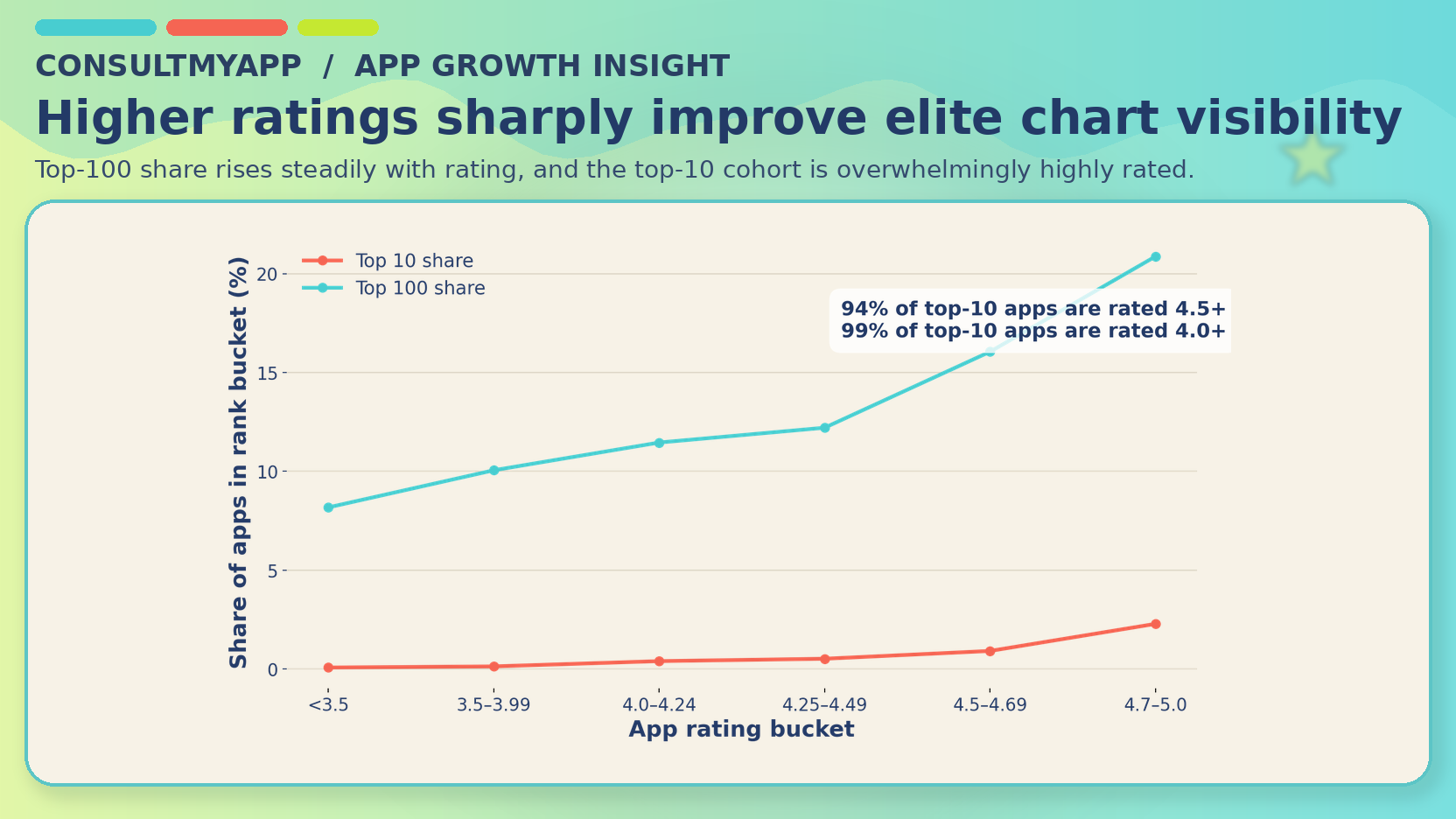

From the earlier rating-to-rank analysis, we already knew that a better rating tends to go with a higher chart position. The category-level rank-to-download curves then map those chart positions to estimated daily organic downloads by category. That lets us move from “better rating is associated with better rank” to “better rating is associated with more organic volume”.

Across the core non-game categories, the median uplift in daily organic downloads looks like this:

• 4.0★ → 4.6★: +22

• 4.6★ → 4.8★: +57

• 4.0★ → 4.8★: +72

That last number is the one most growth teams should care about. A move from roughly 4.0 to 4.8 stars is associated here with about 72 extra organic downloads per day on the median core non-game category curve. Annualised, that is roughly 26,000 extra organic downloads per year.
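The annualised figure is simple arithmetic, sketched below for all three median uplifts. It assumes the daily uplift holds steady for 365 days, which is a simplification; the helper name `annualize` is illustrative.

```python
def annualize(daily_uplift: float, days_per_year: int = 365) -> float:
    """Scale a constant daily uplift to a yearly total."""
    return daily_uplift * days_per_year


# Median daily uplifts quoted above for core non-game categories.
median_daily_uplift = {
    "4.0 -> 4.6": 22,
    "4.6 -> 4.8": 57,
    "4.0 -> 4.8": 72,
}

yearly = {step: annualize(value) for step, value in median_daily_uplift.items()}

for step, total in yearly.items():
    print(f"{step}: {total:,.0f} extra organic downloads per year")
```

For the 4.0 to 4.8 move this gives 72 × 365 = 26,280, which is the "roughly 26,000" quoted above.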

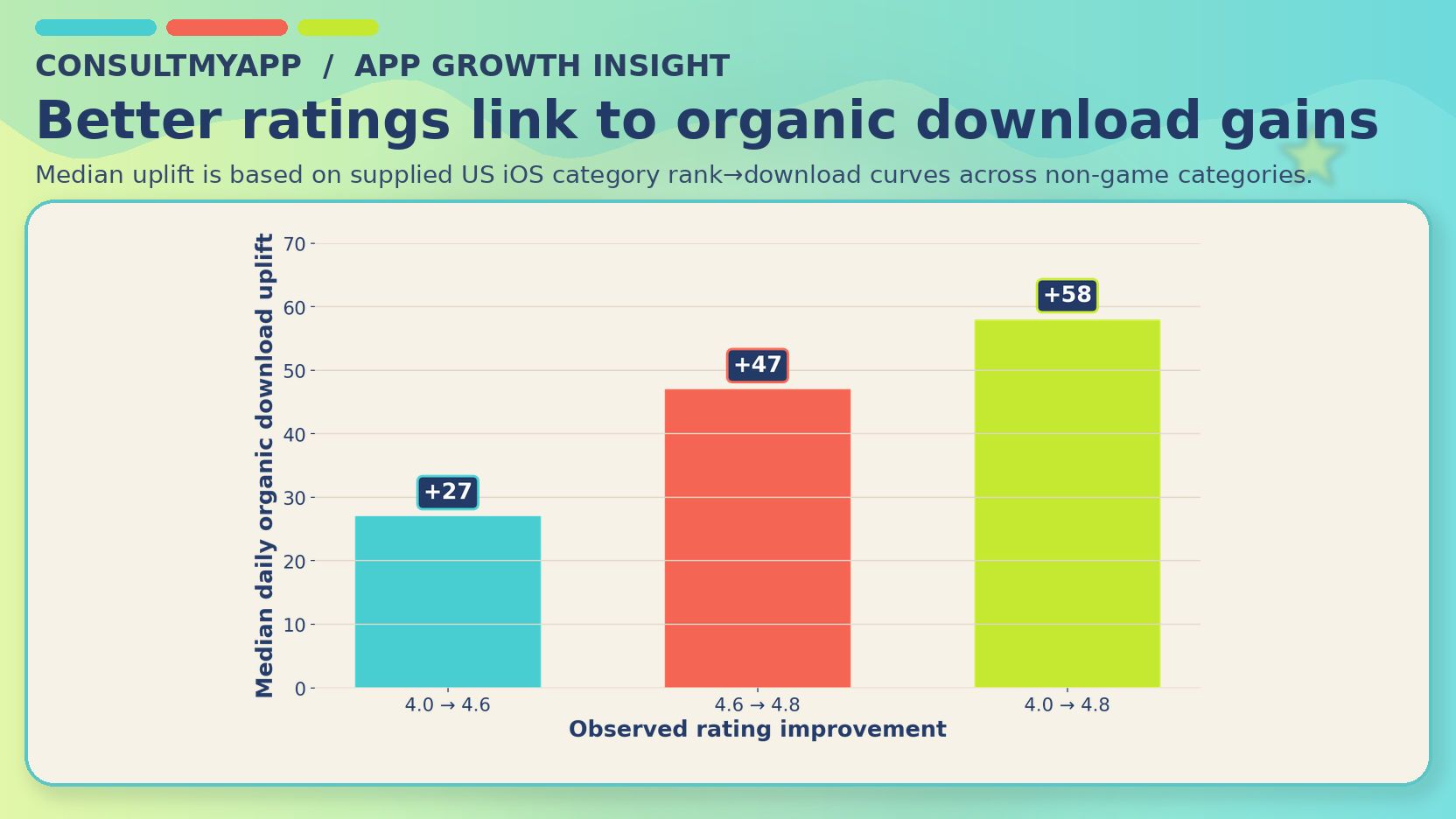

The exact value varies by category, and some category curves are noisier than others, but the direction is usually positive. In several categories the upside is particularly meaningful: Shopping +313, Medical +248, Weather +179, Sports +145, and Photo & Video +140.

This is the point many teams underweight. Ratings do not just change how your app looks in the store. They change where you sit in the store, and where you sit in the store changes how much free demand you can capture. That is why rating work should not be treated as brand hygiene or community management alone. It is better thought of as an organic acquisition lever.

Win 3: better ratings should improve conversion and make paid cheaper too

The third win is not a direct output of the metadata dataset, so it is important to be precise about what follows. This part is a commercial inference rather than a variable measured in the dataset.

When paid traffic lands on your App Store page, star rating is one of the first trust signals a user sees. A stronger rating reassures. A weaker rating creates hesitation. That matters because your paid efficiency is not determined only by media buying. It is also determined by what happens when a user arrives on the store page.

In practical terms, the chain works like this: you pay to generate a tap; the user lands on your store page; the rating helps determine whether that tap converts into an install; and better conversion means more installs from the same media spend. More installs from the same spend means lower CPI and, downstream, lower CPA.
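The chain above can be put into numbers with a small sketch. Every figure in it is hypothetical and chosen only to show the mechanism: the spend, the tap volume and both conversion rates are assumptions, not values from the dataset, and `cost_per_install` is an illustrative helper.

```python
def cost_per_install(spend: float, taps: int, tap_to_install_rate: float) -> float:
    """CPI = spend / installs, where installs = taps * store-page conversion rate."""
    installs = taps * tap_to_install_rate
    if installs <= 0:
        raise ValueError("no installs: CPI is undefined")
    return spend / installs


# Hypothetical inputs (assumptions for illustration only).
spend = 10_000.0    # media spend
taps = 20_000       # taps bought with that spend
cvr_before = 0.25   # assumed store-page conversion at a weaker rating
cvr_after = 0.30    # assumed store-page conversion at a stronger rating

cpi_before = cost_per_install(spend, taps, cvr_before)
cpi_after = cost_per_install(spend, taps, cvr_after)

print(f"CPI before: {cpi_before:.2f}")
print(f"CPI after:  {cpi_after:.2f}")
```

With the same spend and the same taps, the only thing that changes is the conversion rate, and CPI falls in proportion. How much conversion actually moves with rating has to be measured per app.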

So even if a rating initiative delivered no chart benefit at all, it would still matter for paid because it improves the efficiency of traffic you are already buying. In reality, the more interesting point is that ratings can help on both sides at once: organic visibility and paid conversion.

The real takeaway: if your rating is weak, you have a growth problem

The temptation is to think about ratings as reputation. The data suggests they should be thought about more broadly than that.

A weak rating is often doing three separate jobs against you at the same time: limiting your ability to sit in stronger chart positions, suppressing the amount of organic demand you capture, and reducing the conversion efficiency of paid traffic landing on the store page.

That is why rating improvement work deserves more senior attention than it usually gets. It is not merely a UX clean-up project or a support-team KPI. It is a growth initiative.

What app teams should do next

In many cases, the apps themselves aren’t intrinsically bad. They suffer from poorly designed rating and review prompts.

The most effective programmes typically combine product issue triage, review mining, smart prompt timing, country and platform monitoring, response and resolution loops, and ongoing ASO and paid alignment so any rating gains are fully exploited commercially.

In other words, the prize is not just a prettier number beside your brand name. The prize is a stronger acquisition engine.

CMA have spent years refining the prompt, creative, trigger and segmentation behind rating requests, so we can quickly step into any app and resolve rating and review problems, usually within a matter of weeks. See our case study page for examples of how we’ve done this for many of our happy clients!

Final thought

If your app rating is sitting around 4.0, this dataset suggests that getting it closer to 4.8 is not a marginal win.

It is associated with materially better category rank, materially more organic downloads and a stronger conversion environment for paid traffic. That is a rare combination. Most levers improve one part of the funnel. Ratings can improve three.

For teams serious about efficient growth, that makes app rating one of the most underrated performance levers in mobile marketing. Reach out to us today and we’ll happily walk you through how we can improve your app’s rating and reviews in a matter of weeks.